“I’ve been saying since November, we’re looking at three to nine months until a massive influx of zero-day vulnerabilities,” Adam says in this episode.

Below are some of the ways defenders can incorporate AI into their security posture:

Check out the full Adversary Universe podcast episode below or tune in on Spotify and Apple Podcasts.

The most urgent topic discussed is the “vuln-pocalypse,” a term used to describe the projected massive influx of newly discovered vulnerabilities driven by AI-accelerated research.

The Looming Vuln-pocalypse

AI is reshaping the future of vulnerability research. Advanced AI models are capable of discovering vulnerabilities at machine speed, far faster than organizations can patch them. The consequences for defenders are enormous — and the opportunities for adversaries are vast.

As AI rapidly matures and adversaries explore its use, the hosts explain, the pressure is on organizations to defend against evolving adversary tradecraft. Vulnerability discovery, exploitation, and patching are front and center among their concerns. And CrowdStrike is at the forefront of defense, as a founding member of Project Glasswing and a participant in OpenAI’s Trusted Access for Cyber program.

Why? Adversaries are eyeing zero-days and weaponizing vulnerabilities at greater speed. In 2025, CrowdStrike Counter Adversary Operations observed a 42% year-over-year increase in the number of zero-days exploited prior to public disclosure, the 2026 Global Threat Report found. Chinese adversaries demonstrated they can consistently operationalize publicly disclosed exploits within days of the vulnerability’s release — in some cases, within two days.

There are two ways organizations typically prioritize patching. The first is prevalence, or how widespread a vulnerability is in their environment. The second is severity, typically determined by CVSS score. This system breaks down when adversaries chain multiple vulnerabilities together. Each vulnerability may appear low-priority in isolation, but chained together they can open a door.

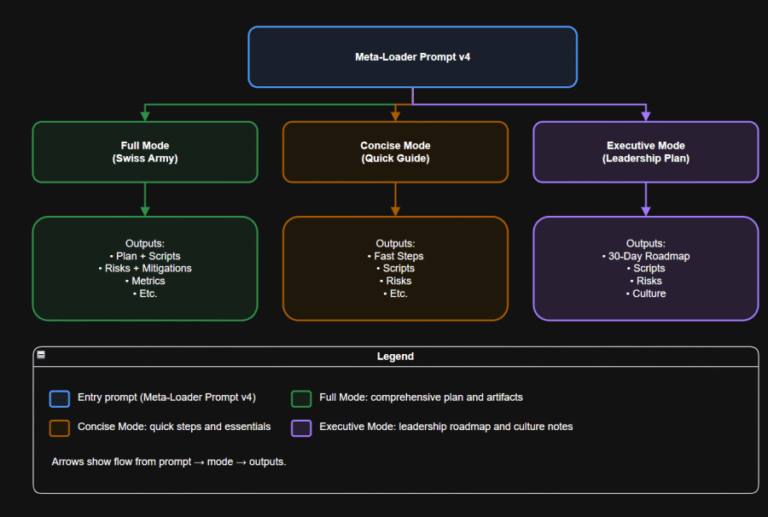

In the latest episode of the Adversary Universe podcast, CrowdStrike’s Adam Meyers, SVP of Counter Adversary Operations, and Cristian Rodriguez, Field CTO of the Americas, unpack some of the most pressing questions facing security teams today: What does AI-powered vulnerability research mean for the future of security operations? How will adversaries use it to their advantage?

Not an “End of the World” Situation

These observations contribute to CrowdStrike’s “community immunity,” Cristian says. “Every time an adversary burns through some new type of tradecraft, we’re crowdsourcing that telemetry.” All of this high-fidelity telemetry can then be used to identify that behavior in the future.

Patching Prioritization

While organizations are rightfully concerned about the rise in vulnerabilities, Adam and Cristian shared some key defensive takeaways to help them approach it.

Organizations are also advised to stay current on agentic AI news to understand this constantly evolving space and prepare their environments.

Zero Days Are Just the Beginning

Zero-days are alarming, but they’re not the catastrophe many assume they are. Even if an adversary uses a zero-day to gain access, Adam explains, they still need to do something with their access — move laterally, escalate privileges, identify targets, exfiltrate data. All of this post-exploitation activity is observable. If the adversary can be caught, they can be stopped.

¹ 2026 VulnCheck Exploit Intelligence Report

AI in the Defender’s Toolbox

- Agentic red teaming: Continuous red-team exercises can surface vulnerabilities in the environment before adversaries find them.

- AI vulnerability scanning: Use AI to proactively identify vulnerabilities in the development pipeline.

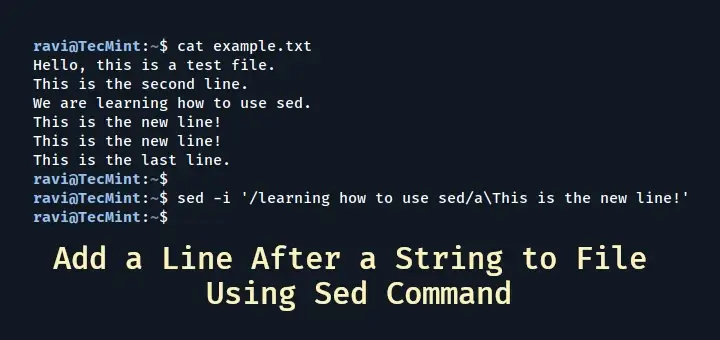

To explain why, he describes the two ways vulnerabilities are traditionally found. One is deep reverse engineering of the target to create an exploit. The other, more frequently used method is fuzzing: feeding random data into a program’s inputs until it crashes, then analyzing the results to see what is broken and potentially exploitable. AI can dramatically accelerate fuzzing by triaging those results far faster than a human could to find something useful.
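To make the fuzzing loop concrete, here is a minimal sketch in Python. It is not how any particular fuzzer or AI system works; `parse_record` is a hypothetical toy target with a deliberately planted bug, and `fuzz` is an illustrative helper. The sketch feeds random bytes to the target, treats a clean `ValueError` as an expected rejection, and groups every other exception by type, which is a crude stand-in for the triage step that AI is said to speed up.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical toy target: the first byte declares an offset into the payload."""
    if len(data) < 2:
        raise ValueError("record too short")  # clean rejection, not a crash
    declared_offset = data[0]
    payload = data[1:]
    # Deliberate bug for illustration: the declared offset is trusted
    # without checking it against the payload length.
    return payload[declared_offset]


def fuzz(target, iterations: int = 2000, max_len: int = 8, seed: int = 0) -> dict:
    """Feed random inputs to `target` and bucket unexpected exceptions by type.

    Returns a mapping of exception name to the shortest input that triggered it.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    crashes = {}
    for _ in range(iterations):
        size = rng.randrange(max_len + 1)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except ValueError:
            continue  # the target handled bad input gracefully
        except Exception as exc:
            # Triage: keep one small reproducer per kind of failure.
            kind = type(exc).__name__
            if kind not in crashes or len(data) < len(crashes[kind]):
                crashes[kind] = data
    return crashes


if __name__ == "__main__":
    for kind, reproducer in fuzz(parse_record).items():
        print(kind, reproducer.hex())
```

Real fuzzers add coverage feedback, input mutation, and crash deduplication on top of this loop. The bucketing step is where the volume of results piles up and where faster triage would matter most.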

More than 48,000 new CVEs were published in 2025.¹ If AI accelerates discovery by even 10x, Adam points out, defenders could be looking at nearly half a million vulnerabilities requiring attention in the coming years. “That’s going to mean significant trouble,” he notes.

Threat actors are already using AI in their operations: The CrowdStrike 2026 Global Threat Report revealed an 89% year-over-year increase in attacks by adversaries using AI. FANCY BEAR, FAMOUS CHOLLIMA, and PUNK SPIDER are among the prolific threat actors weaponizing AI in their operations, using it to craft more convincing phishing lures, automate social engineering, and speed up the production of malicious content. While core tradecraft remains human-driven, AI acts as a force multiplier, helping adversaries increase efficiency. A tool in the eCrime space uses AI to conduct voice phishing attacks, which can now be executed agentically.

Additional Resources

Organizations must be more thoughtful about what they’re patching, how they’re patching, and when. Adam’s guidance is to patch based on what is actively being exploited in the wild; he references CISA’s Known Exploited Vulnerabilities catalog, which shares, on a weekly basis, the vulnerabilities CISA knows are being exploited. Security teams don’t have to patch every vulnerability; they have to patch the vulnerabilities that present the greatest threat.
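One way to picture exploitation-first patching is as a sort order. The sketch below is an illustration under stated assumptions, not a CrowdStrike or CISA tool: the `Finding` type, the `prioritize` function, and the placeholder CVE IDs are all hypothetical, and the set of known-exploited IDs is assumed to have been pulled from the Known Exploited Vulnerabilities catalog beforehand. Findings with known exploitation come first, and severity and prevalence only break ties within each group.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str
    cvss: float       # severity score, 0.0 to 10.0
    host_count: int   # prevalence: affected hosts in the environment


def prioritize(findings, known_exploited):
    """Order findings: known-exploited first, then by severity, then by prevalence."""
    return sorted(
        findings,
        key=lambda f: (
            f.cve_id not in known_exploited,  # False sorts before True
            -f.cvss,
            -f.host_count,
        ),
    )


# Placeholder data for illustration only; these are not real CVE IDs.
inventory = [
    Finding("CVE-0000-0001", cvss=9.8, host_count=40),
    Finding("CVE-0000-0002", cvss=5.3, host_count=300),
    Finding("CVE-0000-0003", cvss=7.5, host_count=12),
    Finding("CVE-0000-0004", cvss=9.8, host_count=5),
]
# Assumed to come from the Known Exploited Vulnerabilities catalog.
known_exploited = {"CVE-0000-0002", "CVE-0000-0003"}

if __name__ == "__main__":
    for finding in prioritize(inventory, known_exploited):
        print(finding.cve_id, finding.cvss, finding.host_count)
```

In this sample, the two actively exploited findings land ahead of both 9.8-severity findings that have no known exploitation, which is the reordering the guidance above describes.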