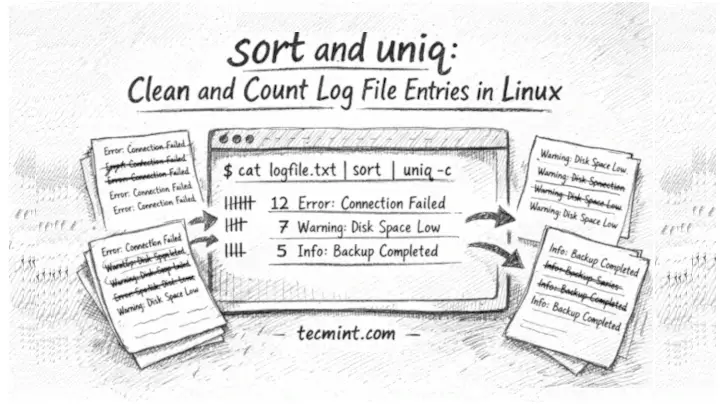

In this guide, we’ll show you how to use sort and uniq together to deduplicate, count, and summarize log file entries in Linux with practical examples.

You’re staring at a log file with 80,000 lines. The same error repeats 600 times in a row. grep gives you a wall of identical output. You don’t need to read all 80,000 lines – you need to know which errors occurred and how many times each one appeared. That’s exactly what sort and uniq solve, and most Linux beginners don’t know they go together.

What sort and uniq Actually Do

sort is a command-line tool that rearranges lines of text into alphabetical or numerical order. By default, it sorts A to Z. uniq is a command that filters out duplicate lines, but here’s the part that catches beginners off guard: uniq only removes duplicates that are next to each other. If the same line appears twice but with other lines in between, uniq won’t catch it.

That’s why you almost always run sort first. Sorting brings all identical lines together so uniq can collapse them. The two commands are independent tools, but they’re designed to work as a pair.
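To see the adjacency rule in action, here is a small sketch. The three input lines are made up for illustration and fed in through a helper function named sample, which is not part of either tool:

```shell
# Three lines where the duplicate "apple" lines are NOT adjacent.
sample() { printf 'apple\nbanana\napple\n'; }

# uniq alone compares only neighbouring lines, so both "apple" lines survive.
sample | uniq

# Sorting first puts the duplicates next to each other, so uniq collapses them.
sample | sort | uniq
```

The first pipeline prints all three lines unchanged; the second prints apple and banana once each.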

The core pattern is a pipe (|), which takes the output of one command and feeds it as input to the next:

sort filename.txt | uniq

If you want to count how many times each unique line appears, add the -c flag (short for “count”) to uniq:

sort filename.txt | uniq -c

The count appears as a number at the start of each line. The higher the number, the more times that line appeared in the original file.
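A quick way to try the counting pattern without a real file is to pipe in a few made-up lines. The levels helper below is hypothetical sample data, nothing more:

```shell
# Hypothetical sample data: five log levels in arbitrary order.
levels() { printf 'ERROR\nINFO\nERROR\nWARN\nERROR\n'; }

# sort groups identical lines; uniq -c collapses each group and prefixes its size.
# The amount of leading whitespace before the count varies between implementations.
levels | sort | uniq -c
```

This should report a count of 3 for ERROR and 1 each for INFO and WARN.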

1. Find Which IPs Are Hitting Your SSH Server

Failed SSH login attempts pile up in /var/log/auth.log on Debian and Ubuntu systems. This pipeline extracts the offending IP addresses and ranks them by frequency:

grep "Failed password" /var/log/auth.log | awk '{print $11}' | sort | uniq -c | sort -rn

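grep "Failed password" keeps only the failed-login lines. awk '{print $11}' prints the 11th whitespace-separated field, which is where the IP address sits in a traditional syslog line of the form "Failed password for USER from IP port ...". Attempts against non-existent accounts are logged as "Failed password for invalid user NAME from IP ...", which pushes the IP to field 13, so check a sample line before trusting the field number. sort | uniq -c groups the addresses and counts each one, and sort -rn at the end sorts numerically (-n) in reverse order (-r) so the highest counts appear first.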

218 203.0.113.45

142 192.168.1.105

87 10.0.0.22

9 198.51.100.4

The left column is the count of failed attempts. The right column is the IP. A common mistake here is forgetting the final sort -rn – without it, the output stays ordered by IP address (the order the first sort produced) and you have to hunt for the worst offenders yourself.
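Because the position of the IP can shift depending on the log line, one option is to print whatever follows the word "from" rather than a fixed field number. The sketch below assumes the traditional syslog wording; ip_after_from and sample_auth are hypothetical helper names, and the log lines are invented using documentation IP ranges:

```shell
# Print the word that follows the last "from" on each line, wherever it falls.
# This copes with both "for root from IP" and "for invalid user bob from IP".
ip_after_from() {
  awk '{ ip = ""
         for (i = 1; i < NF; i++) if ($i == "from") ip = $(i + 1)
         if (ip != "") print ip }'
}

# Made-up sample lines in the traditional syslog layout.
sample_auth() {
  cat <<'EOF'
Apr 18 10:00:01 host sshd[101]: Failed password for root from 203.0.113.45 port 4242 ssh2
Apr 18 10:00:02 host sshd[102]: Failed password for invalid user bob from 203.0.113.45 port 4243 ssh2
Apr 18 10:00:03 host sshd[103]: Failed password for root from 198.51.100.4 port 4244 ssh2
EOF
}

sample_auth | grep "Failed password" | ip_after_from | sort | uniq -c | sort -rn
```

On the sample data this counts 203.0.113.45 twice and 198.51.100.4 once. To use it on a real system, replace sample_auth with the log file as the input to grep.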

2. Summarize HTTP Status Codes in an Nginx Log

The standard Nginx access log records one request per line, with the HTTP status code in the 9th field, which tells you how your server is actually responding to traffic:

awk '{print $9}' /var/log/nginx/access.log | sort | uniq -c | sort -rn

Output:

8432 200

1201 404

312 301

89 500

14 403

200 means the request succeeded. 404 means the page wasn’t found. 500 means your server threw an error — if that number is high, something needs attention.

This is the kind of quick health check that takes 10 seconds and saves hours of log reading. If you see awk: cannot open /var/log/nginx/access.log, you may need to run the command with sudo.
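Once a status code stands out, the same counting pattern can show which paths produce it. The following is a sketch that assumes Nginx's default "combined" log layout, where the request path is field 7 and the status is field 9; sample_access is a hypothetical helper holding invented log lines:

```shell
# Made-up access log lines in the "combined" layout.
sample_access() {
  cat <<'EOF'
203.0.113.45 - - [18/Apr/2025:10:00:01 +0000] "GET /missing HTTP/1.1" 404 153 "-" "curl/8.0"
203.0.113.45 - - [18/Apr/2025:10:00:02 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/8.0"
198.51.100.4 - - [18/Apr/2025:10:00:03 +0000] "GET /missing HTTP/1.1" 404 153 "-" "curl/8.0"
198.51.100.4 - - [18/Apr/2025:10:00:04 +0000] "GET /old-page HTTP/1.1" 404 153 "-" "curl/8.0"
EOF
}

# Keep only requests whose status (field 9) is 404, print the path (field 7),
# then count and rank the paths.
sample_access | awk '$9 == 404 {print $7}' | sort | uniq -c | sort -rn
```

Here /missing is counted twice and /old-page once. If your log_format differs from the default, the field numbers will need adjusting.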

3. Remove Duplicate Lines from Any Text File

sort has a shortcut flag, -u (unique), that combines sorting and deduplication into a single step:

sort -u duplicates.txt

If duplicates.txt contained:

banana

apple

banana

cherry

apple

Output:

apple

banana

cherry

Every line appears exactly once, and the output is alphabetically ordered. This works on any plain text file, not just logs. The most common beginner mistake is expecting the original file to be changed — sort -u prints to the terminal by default.

To save the result to a new file, use:

sort -u duplicates.txt > cleaned.txt
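If you do want to overwrite the original file, redirecting back onto it (sort -u file > file) does not work, because the shell empties the file before sort reads it. sort's -o option is meant for this case. The sketch below runs on a throwaway file created with mktemp, so the path and contents are purely illustrative:

```shell
# Work on a throwaway file so nothing real is overwritten.
tmp=$(mktemp)
printf 'banana\napple\nbanana\ncherry\napple\n' > "$tmp"

# -o names the output file. sort reads all of its input before writing,
# so the output file may safely be the same as the input file.
sort -u -o "$tmp" "$tmp"

# Show the deduplicated contents, then clean up.
result=$(cat "$tmp")
rm -f "$tmp"
printf '%s\n' "$result"
```

The file ends up holding apple, banana, and cherry, one per line.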

4. Show Only the Lines That Are Duplicated

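The -d flag on uniq prints one copy of each line that appeared more than once, which is the opposite of removing duplicates. Whole access-log lines rarely repeat exactly because each one carries its own timestamp, so first pull out the part you want to compare. Here awk splits each line on double quotes and prints the request string:

awk -F'"' '{print $2}' access.log | sort | uniq -d

Output:

GET /admin HTTP/1.1

GET /wp-login.php HTTP/1.1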

This is useful for spotting suspicious repeated requests in web logs, or finding cron job output that’s running more times than it should. If nothing prints, all lines in your file are unique.
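uniq -d tells you which lines repeat but not how often. One portable way to get both is to count everything with uniq -c and keep only the rows whose count is above 1. This is a sketch on invented request lines; requests is a hypothetical helper:

```shell
# Made-up request lines; two of them repeat.
requests() {
  cat <<'EOF'
GET /admin HTTP/1.1
GET /index.html HTTP/1.1
GET /wp-login.php HTTP/1.1
GET /admin HTTP/1.1
GET /wp-login.php HTTP/1.1
GET /wp-login.php HTTP/1.1
EOF
}

# uniq -d: one copy of each line that occurs more than once.
requests | sort | uniq -d

# uniq -c plus an awk filter on the count column: the same lines, with counts,
# ranked so the most repeated request comes first.
requests | sort | uniq -c | awk '$1 > 1' | sort -rn
```

The second pipeline lists /wp-login.php with a count of 3 and /admin with a count of 2, and drops /index.html because it appears only once.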

5. Sort and Count Errors in a Custom App Log

Say your application writes a log where each line carries a date, a time, and then a level like ERROR, WARN, or INFO. You want to count how many of each level appeared on a given day (here 2025-04-18):

grep "2025-04-18" /var/log/myapp.log | awk '{print $3}' | sort | uniq -c | sort -rn

Output:

512 ERROR

210 WARN

88 INFO

Adjust $3 to match whichever field holds the log level in your application’s format. If you’re unsure which field number to use, run:

awk '{print $1, $2, $3, $4}' yourfile.log | head -5

to preview the first few fields side by side.
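Another way to find the right field is to print every field of the first line next to its index. The helper name number_fields and the sample line are assumptions made for this sketch:

```shell
# Print each whitespace-separated field of the first input line with its index,
# so the right $N is easy to spot.
number_fields() {
  head -n 1 | awk '{ for (i = 1; i <= NF; i++) print i ": " $i }'
}

# Made-up log line for demonstration; pipe your own file in instead.
printf '2025-04-18 10:00:01 ERROR database timeout\n' | number_fields
```

For this sample line, the level shows up next to index 3, which is the number to use in awk '{print $3}'.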

The Most Useful Flags of sort and uniq

sort flags worth knowing:

-r – reverse the order (Z to A, or largest number first).

-n – sort numerically instead of alphabetically (so 10 comes after 9, not after 1).

-u – output only unique lines (combines sort + uniq in one step).

-k – sort by a specific field, e.g., -k2 sorts starting at the second column, and -k2,2 limits the comparison to that column alone.
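The difference between the default order and -n is easiest to see on a few numbers. The nums helper is just made-up input for this sketch:

```shell
# Three numbers, one per line.
nums() { printf '10\n9\n1\n'; }

# Default sort compares character by character, so "10" lands before "9".
nums | sort

# -n compares numeric values instead.
nums | sort -n

# Adding -r flips the result: largest first.
nums | sort -rn
```

The three pipelines print 1, 10, 9, then 1, 9, 10, then 10, 9, 1.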

uniq flags worth knowing:

-c – prefix each line with the count of occurrences.

-d – print only lines that are duplicated.

-u – print only lines that appear exactly once (opposite of -d).

-i – ignore case when comparing lines.
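Here is a short sketch of how -u, -i, and -d differ on the same input. The words helper is invented sample data that is already grouped, so no sort is needed for the demonstration:

```shell
# Made-up input in which equal (or case-variant) lines are already adjacent.
words() { printf 'Error\nerror\ninfo\ninfo\nwarn\n'; }

# -u: only lines that occur exactly once (comparison is case-sensitive by default).
words | uniq -u

# -i: treat "Error" and "error" as the same line; the first one seen is kept.
words | uniq -i

# -d: only lines that occur more than once.
words | uniq -d
```

With -u the output is Error, error, warn. With -i it is Error, info, warn. With -d it is info alone.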

Common Mistakes to Avoid

Running uniq without sort first is the most frequent error. If duplicate lines aren’t adjacent, uniq misses them entirely and your output still contains duplicates. Always run sort | uniq, not uniq alone.

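The second common mistake is using sort -n when the lines don’t start with a number. sort -n compares the number at the start of each line (leading blanks are skipped) and treats a line with no leading number as 0, so such lines all tie and fall back to plain text order. On piped uniq -c output, sort -rn works because uniq -c always puts the count first. If the number sits in a later column, point sort at it with -k, e.g. sort -k2,2n.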
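A short sketch of what happens when the number is not in the first column, and how a key option addresses it. The pairs helper is made-up "name count" data:

```shell
# Made-up "name count" pairs: the number is in the second column.
pairs() { printf 'alpha 10\nbeta 9\ngamma 100\n'; }

# Plain -n looks for a number at the start of each line, finds none,
# and treats every line as 0, so the result is not ordered by count.
pairs | sort -n

# -k2,2n restricts the comparison to field 2 and makes it numeric.
pairs | sort -k2,2n
```

The second pipeline prints beta 9, alpha 10, gamma 100, which is the order by count.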

Conclusion

You learned how sort and uniq work together to turn noisy log files into clean, countable summaries. sort groups identical lines side by side, uniq collapses or counts them, and flags like -c, -d, -u, -r, and -n let you ask precise questions without writing a script.

Right now, pick any log file on your system, like /var/log/syslog or /var/log/dpkg.log, and run the count pipeline against it. You can even try it on your bash history:

history | awk '{print $2}' | sort | uniq -c | sort -rn

You’ll immediately see patterns you didn’t know were there.

Have you used sort and uniq to catch something unexpected in a production log? What was the most useful combination of flags you found? Tell us in the comments below.