- The goal of the Secure AI project is to fortify the security of AI-enabled systems and address the unique vulnerabilities and novel adversary attacks they face

- Its results were used to expand MITRE ATLAS®, a comprehensive knowledge base of adversary tactics and techniques targeting AI systems

- As a cybersecurity industry leader and a Center for Threat-Informed Defense Research Partner, CrowdStrike provided valuable expertise to drive the success of the Secure AI project

Every time we work with the Center for Threat-Informed Defense, it is in pursuit of the privately funded Center’s mission: “To advance the state of the art and the state of the practice in threat-informed defense globally.”

Researchers also identified a series of cutting-edge threats to AI-enabled systems:

As a Research Partner with the Center for Threat-Informed Defense, CrowdStrike contributed to the Secure AI project by providing expertise and anonymized information about incidents observed in real-world adversarial attacks.

As organizations deploy more AI-enabled systems across their networks, adversaries are taking note and using sophisticated new tactics, techniques and procedures (TTPs) against them.

The Secure AI Project

The need for continued innovation to fight these threats is paramount. MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems), launched in 2021, is a framework modeled after the globally acclaimed MITRE ATT&CK® matrix to capture and define TTPs employed against AI-enabled systems, including those based on large language models (LLMs).

CrowdStrike’s commitment to cybersecurity research and innovation can be seen in the best-in-class protection provided by the AI-native CrowdStrike Falcon platform.

AI-enabled cybersecurity solutions face the same cyber threats as traditional systems, but they must also defend against a new class of sophisticated attacks designed to exploit the unique characteristics of AI.

- Acquire Infrastructure: Domains — Tactic: Resource Development

- Erode Database Integrity — Tactic: Impact

- Discover LLM Hallucinations

- Publish Hallucinated Entities

We believe our responsibility to help defend against cybersecurity threats extends beyond providing our customers with cutting-edge protection. Our researchers and data scientists regularly publish their latest findings, sharing valuable information to improve defenses against adversarial attacks. Our team also regularly collaborates with the Center for Threat-Informed Defense, and the Secure AI project is the latest example of this partnership. The project focused on identifying and countering the unique threats faced by the latest AI-enabled systems by enhancing the MITRE ATLAS framework.

- Privacy/Membership inference attacks

- Large Language Model (LLM) behavior modification

- LLM jailbreaking

- Tensor steganography

The MITRE Center for Threat-Informed Defense’s Secure AI project was launched to enhance the ATLAS framework to include the latest TTPs and vulnerabilities affecting AI-enabled cybersecurity solutions. As part of this, the Center recently launched the AI Incident Sharing Initiative, an industry resource to improve awareness of threats to AI systems by enabling contributors to receive and share anonymized data on real-world incidents impacting AI systems. Further, the Secure AI project has documented case studies of system vulnerabilities observed by industry partners as well as mitigations to address and document AI-related incidents.

- Generative AI Model Alignment

- Guardrails for Generative AI

- Guidelines for Generative AI

- AI Bill of Materials

- AI Telemetry Logging

Collaboration Is Key to Research and Innovation

Participants in the Secure AI project identified four new techniques to add to the ATLAS framework:

You can read more about the Secure AI project here.

New mitigations were added to ATLAS as well, so that organizations are not only aware of the latest threats targeting AI-enabled systems but can also take measures to prevent those threats from succeeding. Mitigations identified as part of the Secure AI project include:

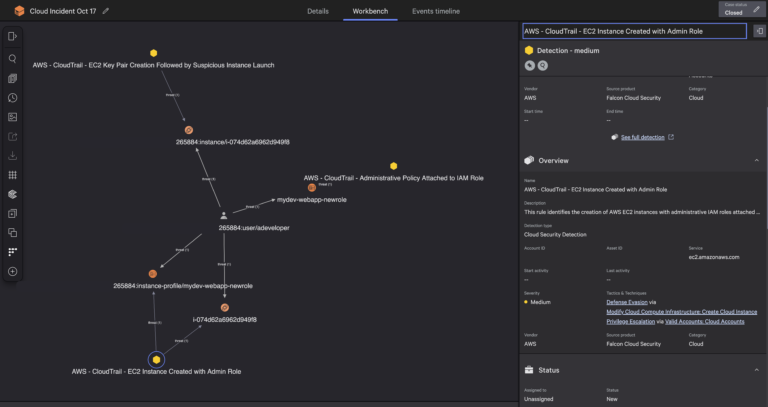

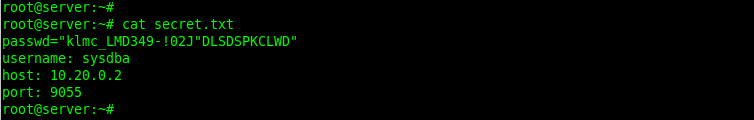

The nature of AI systems introduces new vulnerabilities for threat actors to exploit, leading to damaging and costly cyberattacks. For example, one case study documented as part of the Secure AI project, the “ShadowRay AI Infrastructure Data Leak,” recorded unknown attackers exploiting a previously unknown vulnerability to access Ray production clusters and use them to mine cryptocurrency undetected for seven months. The cost to users in hijacked computers and compute time was estimated at nearly a billion USD.